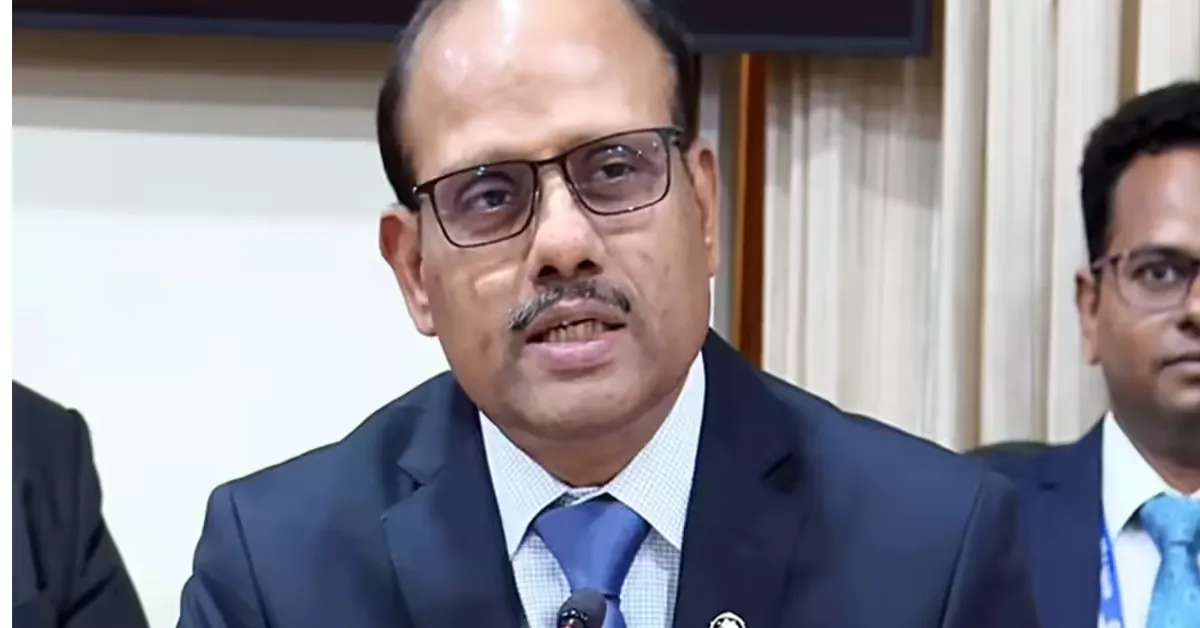

Artificial intelligence (AI) is no longer a concept of the future in the financial sector. It is already here, reshaping how banks, non-banking financial companies (NBFCs), and other financial institutions operate. From processing customer requests to analysing credit risk and detecting fraud, AI systems are rapidly becoming part of everyday financial services. Yet this transformative technology is not without serious risks. The Reserve Bank of India’s Deputy Governor, Swaminathan J, recently sounded a cautionary note about the unchecked adoption of AI in finance, warning that relying too heavily on opaque AI systems could deepen existing vulnerabilities and create new ones, potentially threatening trust and stability in the financial system. His remarks offer a timely reminder that technological progress must be matched with careful governance and ethical oversight.

In this post, we take a deep dive into what Swaminathan said, why opaque AI systems are a concern in finance, the risks they can pose, and how regulators and financial institutions can respond responsibly to this technology’s opportunities and pitfalls.

AI in Finance: Promise and Peril

Artificial intelligence has enormous potential to improve financial services. At its best, it can automate routine tasks, enhance fraud detection, personalise customer services, reduce operational costs, and even extend credit to underserved segments of society. For example, AI systems can analyse transaction data to identify creditworthy individuals who might lack traditional credit scores, thus helping expand financial inclusion. They can also sift through large volumes of data to spot unusual patterns that human analysts might miss, improving risk management and compliance oversight.

Despite these clear benefits, Swaminathan underlined that AI is “a double-edged instrument.” The speed of AI adoption is remarkable, but without safeguards, the same technology can amplify existing weaknesses or create entirely new vulnerabilities in the financial sector. The key, he suggests, is not simply whether finance becomes more intelligent, but whether it remains fair, accountable, inclusive, and humane.

Opaque AI Systems: What Are They?

One of the central concerns raised by Swaminathan is the issue of opacity in AI systems. When financial institutions use AI models, especially advanced machine learning or deep learning models, much of the internal decision-making process can be hidden from view, even from the people who deploy or rely on them. These “black box” systems make decisions based on complex patterns in data without offering any clear explanation of how those decisions were reached.

In finance, such opacity can be particularly harmful. Decisions made by AI systems may directly affect a person’s economic life: approving or rejecting a loan application, determining credit limits, or setting insurance premiums. If a customer’s loan is rejected because of a model’s internal logic that neither the bank nor the customer understands, then trust is undermined and accountability is lost. Swaminathan stressed that finance cannot become a black box, and that decisions impacting individuals must be explainable and defensible.

Bias and Unfair Outcomes

Another major concern is bias. AI systems learn from data, often historical data, and that data reflects past behaviour, structural inequalities, and existing biases in society. If these imperfections are embedded in training data, AI models can reproduce and even amplify them at scale. For example, credit assessment models trained on biased data might unfairly deny loans to certain demographic groups, reinforcing exclusion rather than promoting fairness.

Swaminathan placed bias and unfair outcomes high on his list of risks. He warned that financial institutions must be aware that historical patterns captured by AI may not always reflect equitable or ethical criteria for evaluating customers. If a model’s logic perpetuates exclusion or unfair treatment, the outcome may be as harmful as individual human prejudice, or more so, because the model applies the same flawed logic to every application it processes.
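To make this concern concrete, one basic check an institution might run is to compare approval rates across applicant groups in a model's decisions. The Python sketch below is illustrative only: the function names `approval_rates` and `approval_rate_ratio`, the group labels and the sample data are all hypothetical. The ratio it computes is a screening signal, not a regulatory test or proof of bias, since groups can differ for legitimate reasons.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Return the approval rate for each applicant group.

    `decisions` is an iterable of (group, approved) pairs, where
    `approved` is True if the model approved the application.
    """
    totals = defaultdict(int)
    approvals = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        if approved:
            approvals[group] += 1
    return {g: approvals[g] / totals[g] for g in totals}


def approval_rate_ratio(decisions):
    """Ratio of the lowest group approval rate to the highest.

    1.0 means every group is approved at the same rate; values well
    below 1.0 flag a disparity worth investigating.
    """
    rates = approval_rates(decisions)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was approved, so rates do not differ
    return min(rates.values()) / highest


# Hypothetical audit sample: (applicant group, model decision)
sample = [
    ("A", True), ("A", True), ("A", True), ("A", False),
    ("B", True), ("B", False), ("B", False), ("B", False),
]
print(approval_rates(sample))                 # {'A': 0.75, 'B': 0.25}
print(round(approval_rate_ratio(sample), 2))  # 0.33
```

A ratio well below 1.0, as in this toy sample, would prompt a closer look at the model's inputs and training data rather than settle the question on its own.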

Data Privacy and Misuse

AI systems rely on massive amounts of data to function effectively. In finance, this often includes highly sensitive personal and financial information. Swaminathan pointed out that in the age of AI, data privacy and governance cannot be treated as secondary concerns. Financial institutions must think seriously about consent, storage, access controls, sharing, and purpose limitation of the data they use.

Without robust governance, there is a risk that the data feeding AI systems could be misused or exposed to breaches and unauthorised access. The more institutions rely on data to train sophisticated models, the greater the potential harm if that data is mishandled. This challenge becomes even more pronounced when AI models use alternative data sources that fall outside standard regulatory scrutiny, such as social media indicators or behavioural patterns.

Model Risk and Systemic Vulnerabilities

Swaminathan also highlighted another technical challenge: model risk. This refers to the danger that a model may be built on flawed assumptions, incorrect data distributions, or overly simplistic logic that fails under real-world conditions. When such models are deployed at scale within financial institutions, errors or misestimations can have ripple effects across portfolios, customers, and entire markets.

Additionally, if multiple institutions rely on similar vendor solutions, datasets, or algorithms, a localised model error can quickly become a systemic issue. The interconnected nature of financial institutions means that vulnerabilities can propagate, creating broader risks than those seen in isolated cases. This interconnected risk underscores why regulators are paying close attention to AI’s systemic implications.

Cyber Security Threats

The use of AI also introduces new forms of cyber risk. Swaminathan warned that cyber attackers could use AI itself as a tool to craft more convincing phishing campaigns, automate malicious activities, or identify weaknesses in systems. As financial services digitalise rapidly, the boundary between operational risk and cyber risk blurs. New threats can emerge not only from system failures but also from deliberate exploitation of AI vulnerabilities.

Ensuring robust cybersecurity frameworks and continuous monitoring becomes vital when AI is deployed. The same ability to learn and adapt that attackers can exploit for offence can be harnessed by institutions for defence, but only if they build the necessary safeguards.

The Imperative of Explainability and Human Accountability

A recurring theme in Swaminathan’s remarks is the need for human accountability. While AI can support decision-making, it should not replace the responsibility that ultimately lies with human professionals and institutions. Decisions with significant economic impact cannot be justified by pointing to a machine’s verdict. Rather, financial institutions must be able to explain why an AI model produced a particular result, and who is accountable for overseeing those outputs.

Explainability is a growing area of research and practice within AI governance. Explainable AI (XAI) aims to make models more transparent by providing understandable insights into how a decision was reached. This is crucial in sectors like finance where regulatory compliance, fairness, and consumer understanding are non-negotiable requirements.
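Explainability techniques vary widely. The simplest case is a transparent, linear scoring model, where each feature's contribution to the score can be read off directly and turned into "reason codes" that tell a declined applicant what counted against them. The Python sketch below assumes such a model; the feature names, weights, intercept and threshold are hypothetical. Genuinely opaque models need dedicated post-hoc XAI methods (for example, SHAP or LIME) rather than this direct decomposition.

```python
# Hypothetical linear credit-scoring model: weights are illustrative only.
WEIGHTS = {
    "income_to_debt_ratio": 2.0,
    "years_of_repayment_history": 0.5,
    "missed_payments_last_year": -1.5,
}
INTERCEPT = -1.0
THRESHOLD = 0.0  # hypothetical cut-off: approve when score >= THRESHOLD


def score_with_explanation(applicant):
    """Score an applicant and return each feature's contribution.

    For a linear model the score is the intercept plus the sum of
    weight * value, so each term is an exact, additive explanation.
    """
    contributions = {
        name: weight * applicant[name] for name, weight in WEIGHTS.items()
    }
    score = INTERCEPT + sum(contributions.values())
    return score, contributions


def reason_codes(applicant, top_n=2):
    """List up to `top_n` features that lowered the score the most."""
    _, contributions = score_with_explanation(applicant)
    negatives = sorted(
        (value, name) for name, value in contributions.items() if value < 0
    )
    return [name for _, name in negatives[:top_n]]


applicant = {
    "income_to_debt_ratio": 0.8,
    "years_of_repayment_history": 2,
    "missed_payments_last_year": 3,
}
score, contributions = score_with_explanation(applicant)
print(score >= THRESHOLD)       # False: the application is declined
print(reason_codes(applicant))  # ['missed_payments_last_year']
```

Because every contribution is visible, a reviewer can check the decision line by line, which is exactly what a black-box model does not allow without additional tooling.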

A Balanced Approach: Innovation with Prudence

Importantly, Swaminathan did not dismiss the value of AI. On the contrary, he acknowledged that when used responsibly, AI can make financial systems more efficient and inclusive. It can help reach underserved customers, deliver faster services, and enhance risk detection. The problem lies not in the technology itself but in how it is adopted and governed.

Swaminathan urged financial institutions and regulators to strike a balance: to embrace innovation without being blinded by hype, and to remain vigilant without retreating into fear. The goal is to make finance more intelligent but not less human; more digital but not less accountable; more inclusive but not less prudent. These principles form a roadmap for responsible AI adoption in finance.

Moving Forward: Regulatory and Institutional Readiness

The risks highlighted by Swaminathan are not unique to India; they reflect global concerns about AI governance in finance. Regulators worldwide, including central banks and financial authorities, are increasingly looking at frameworks to ensure responsible AI deployment. India, too, is exploring ways to enhance data governance, strengthen institutional capabilities, build audit-ready systems, and ensure that AI supports financial stability and consumer protection.

For financial institutions, this means investing not just in technology, but also in organisational capacity, ethical frameworks, and governance structures. Technical excellence must go hand-in-hand with ethical oversight and regulatory compliance.

Conclusion

Artificial intelligence holds immense potential to reshape the financial sector for the better. But as Deputy Governor Swaminathan J of the Reserve Bank of India has made clear, this technology also carries significant risks if adopted without adequate safeguards. The rapid pace of AI adoption should not come at the expense of fairness, transparency, and accountability.

The challenge for policymakers and financial institutions today is not to slow down innovation, but to ensure that progress does not erode trust. Responsible AI is not merely about deploying advanced algorithms; it is about building systems that are ethical, explainable, and aligned with human values. Only then can the financial sector harness the true potential of AI while safeguarding the stability and integrity of the system on which millions of people depend.